# dtypes: float64(2), int64(2), object(3)įrom this, you can see that the age, bmi, and children features are numeric, and that the charges target variable is also numeric. Let’s confirm that the numeric features are in fact stored as numeric data types and whether or not any missing data exists in the dataset. While there are ways to convert categorical data to work with numeric variables, that’s outside the scope of this tutorial.īefore going any further, let’s dive into the dataset a little further. This is because regression can only be completed on numeric variables. You’ll notice I specified numeric variables here. Specifically, you’ll learn how to explore how the numeric variables from the features impact the charges made by a client. charges – the total charges paid by the clientįor this tutorial, you’ll be exploring the relationship between the first six variables and the charges variable.region – the region that the client lives in.smoker – whether the client smokes or not.children – the number of children the client has.bmi – the body mass index of the client.# 4 32 male 28.880 0 no northwest 3866.85520īy printing out the first five rows of the dataset, you can see that the dataset has seven columns: # age sex bmi children smoker region charges To explore the data, let’s load the dataset as a Pandas DataFrame and print out the first five rows using the. You can find the dataset on the datagy Github page. The dataset that you’ll be using to implement your first linear regression model in Python is a well-known insurance dataset. Let’s get started with learning how to implement linear regression in Python using Scikit-Learn! Loading a Sample Dataset The more linear a relationship, the more accurately the line of best fit will describe a relationship.Ī sample line of best fit being applied to a set of data In the image below, you can see the line of best fit being applied to some data.

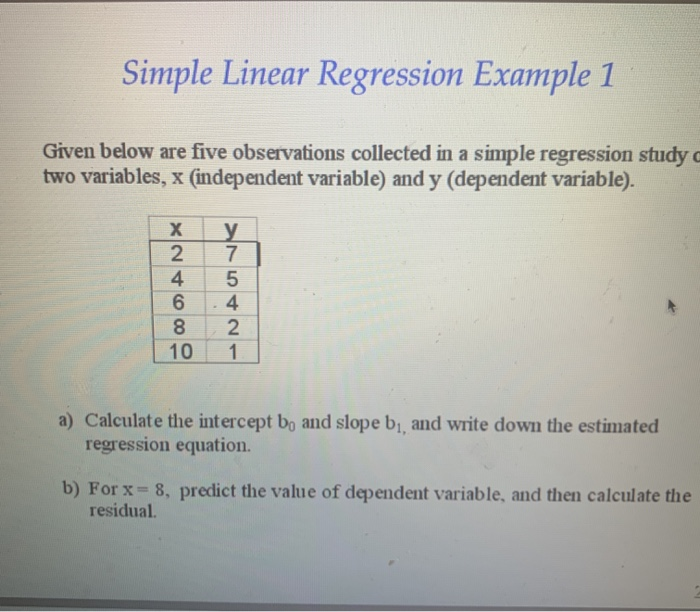

Where the weight and bias of each independent variable influence the resulting dependent variable. This can often be modeled as shown below: In these cases, there will be multiple independent variables influencing the dependent variable. In many cases, our models won’t actually be able to be predicted by a single independent variable. This relationship is referred to as a univariate linear regression because there is only a single independent variable. In machine learning, m is often referred to as the weight of a relationship and b is referred to as the bias. You may recall from high-school math that the equation for a linear relationship is: y = m(x) + b. Put simply, linear regression attempts to predict the value of one variable, based on the value of another (or multiple other variables). Linear regression attempts to model the relationship between two (or more) variables by fitting a straight line to the data. Linear regression is a simple and common type of predictive analysis. Multivariate Linear Regression in Scikit-Learn.Building a Linear Regression Model Using Scikit-Learn.Hope you found my answer helpful or at least interesting. This is a very extensive subject and there are still lots of different opinions out there, so I encourage other people to complement my answer with what they think. If we used the MAD (mean absolute deviation) instead of the standard deviation to calculate both r and the regression line, then the line, as well as r as a metric of its effectiveness, would be more realistic, and we would not even need to square r at all. Here is a paper about that topic presented at the British Educational Research Association Annual Conference in 2004. 
In the case of r, it is calculated using the Standard Deviation, which itself is a statistic that has been long put to doubt because it squares numbers just to remove the sign and then takes a square root AFTER having added those numbers, which resembles more an Euclidean distance than a good dispersion statistic (it introduces an error to the result that is never fully removed). IMHO, neither r o r^2 are the best for this. The short answer is this: In the case of the Least Squares Regression Line, according to traditional statistics literature, the metric you're looking for is r^2.
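
The dataset checks described in this tutorial (printing the first five rows, confirming data types, and looking for missing data) can be sketched roughly as follows. This is only an illustration under stated assumptions: instead of downloading the insurance file, it reads a small inline CSV stand-in. Only the last row comes from the printed output in the tutorial; the other four rows are made-up placeholders, and the variable names are hypothetical.

```python
import io

import pandas as pd

# Inline stand-in for the insurance CSV (assumption: same seven columns).
# Only the last row appears in the tutorial's printed output; the other
# rows are made-up placeholders used purely for illustration.
csv_text = """age,sex,bmi,children,smoker,region,charges
25,female,22.5,0,no,southwest,3000.0
40,male,30.1,2,yes,southeast,20000.0
51,female,27.3,1,no,northeast,9500.0
36,male,24.8,3,no,northwest,6200.0
32,male,28.88,0,no,northwest,3866.8552
"""

df = pd.read_csv(io.StringIO(csv_text))

# Print the first five rows of the DataFrame.
print(df.head())

# Summarize column data types and non-null counts.
df.info()

# Count missing values in each column.
missing_per_column = df.isnull().sum()
print(missing_per_column)

# Keep only the columns stored as numeric data types.
numeric_columns = df.select_dtypes(include="number").columns.tolist()
print(numeric_columns)
```

With the real dataset, the same calls would be applied to the DataFrame loaded from the file on the datagy GitHub page; the inline CSV is used here only so the sketch runs without a download.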

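To make the univariate relationship y = mx + b and the r^2 metric more concrete, here is a minimal sketch in plain Python. It assumes ordinary least squares for the line of best fit, and the data points are made up for illustration (roughly following y = 2x + 1). The function names `fit_line` and `r_squared` are hypothetical helpers, not part of any library.

```python
def fit_line(xs, ys):
    """Return the least-squares weight m and bias b for y = m*x + b."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of squared deviations of x, and of co-deviations of x and y.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    m = sxy / sxx
    b = mean_y - m * mean_x
    return m, b


def r_squared(xs, ys, m, b):
    """Return r^2: the share of variance in ys explained by the line."""
    mean_y = sum(ys) / len(ys)
    ss_res = sum((y - (m * x + b)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1 - ss_res / ss_tot


# Made-up sample data for illustration only.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [3.1, 4.9, 7.2, 8.8, 11.0]

m, b = fit_line(xs, ys)
r2 = r_squared(xs, ys, m, b)

print(f"weight m = {m:.2f}, bias b = {b:.2f}, r^2 = {r2:.4f}")
```

The closer r^2 is to 1, the more of the variation in y the fitted line accounts for, which matches the idea that a more linear relationship is described more accurately by the line of best fit.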